Unsung Heroes: Bringing Docker to IBM’s “Big Iron”

“Superman” by Julian Fong used by permission (CC BY-SA 2.0)

DockerCon is just over a week away! During DockerCon, I’m going to have the privilege to talk more than once about cool things I’ve gotten to work on over the past couple of years. One of those talks will be about our work around the new Docker support for multi-platform content in the Docker v2.3 registry (and engine). But in thinking about that work and why it’s important to IBM, I had to remember that without a significant amount of work by colleagues who won’t be speaking, and some who won’t even be able to attend, there wouldn’t be so much to talk about! With that in mind, I’d like to take a few minutes to celebrate some pretty incredible work done by colleagues both inside and outside of IBM to bring the Docker engine and ecosystem to systems that many people never hear about, much less see or touch, but that are critically important to many of IBM’s largest customers: the POWER platform and the z Systems/LinuxONE mainframe family.

Startup

Divide each difficulty into as many parts as is feasible and necessary to resolve it.

– Rene Descartes

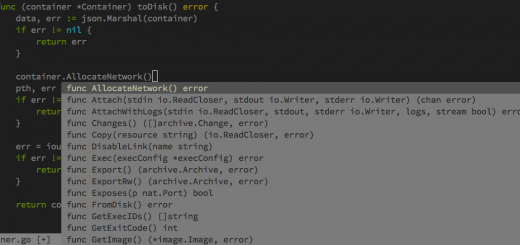

Starting something new is rarely as straightforward as it seems in your mind. Porting a fairly complicated piece of software to a new architecture or platform tends to be full of surprises, mostly not the favorable kind. Docker and its underlying libcontainer dependency (now via OCI/runC) are written in Go and, beyond that, have architecture-specific linkages at the OS layer. So, before even having a discussion about the Docker engine itself, the questions of Go language support for these platforms and of libcontainer’s linkages to the architecture had to be answered first.
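To make the architecture question concrete, here is a minimal Go sketch that reports the platform a binary was built for and checks it against the three architectures discussed in this post. The `supportedArch` helper and its list are illustrative assumptions, not code from Docker or libcontainer; only `runtime.GOOS` and `runtime.GOARCH` come from the Go standard library.

```go
package main

import (
	"fmt"
	"runtime"
)

// supportedArch is a hypothetical helper for illustration only: it reports
// whether arch is one of the GOARCH values discussed in this post.
func supportedArch(arch string) bool {
	switch arch {
	case "amd64", "ppc64le", "s390x":
		return true
	}
	return false
}

func main() {
	// runtime.GOOS and runtime.GOARCH are fixed at compile time, which is
	// why toolchain support has to exist before any porting can start.
	fmt.Printf("GOOS=%s GOARCH=%s supported=%v\n",
		runtime.GOOS, runtime.GOARCH, supportedArch(runtime.GOARCH))
}
```

With a toolchain that supports the target, the same source can be built for another platform by setting the `GOOS` and `GOARCH` environment variables at build time.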

Seetharami Seelam, a Ph.D. Research Staff Member and long-time expert on IBM’s POWER platform, kicked off this work back in 2014, working with Google (Ian Lance Taylor), Canonical (cgo support in concert with James Page), and IBM’s own Linux Technology Center to get Go language support for the POWER platform. Given the long pole of getting official support in native Go, initial work focused on gccgo support. With IBM’s long-time expertise in gcc for IBM architectures, and compiler experts like Lynn Boger, it seemed like the best and quickest path to compiling Go code for our platforms. Of course, keeping a fast-moving language like Go supported at the level needed for Docker caused a lot of churn in our gccgo work: chasing bugs and adding missing features. Lynn and others worked against all odds to make gccgo a viable solution while in parallel helping drive upstream Golang support, culminating in a “roughly feature complete” ppc64le architecture support announcement in Go 1.6! Docker now builds with the standard Go 1.6.x compiler for POWER, and many people can breathe a sigh of relief at no longer having to chase updates and changes to gccgo. Of course, even a Ph.D. IBM researcher needs executive-level sponsorship, and two Distinguished Engineers at IBM, Bruce Anthony and Dipankar Sarma, enabled and championed this work across IBM’s leadership, helping to acquire hardware, handle documents requiring sign-off, and allocate people to the work. Work continues on official Golang support for “s390x” (the Linux CPU architecture moniker for 64-bit z Systems). It will be in an experimental state when Go 1.7 releases in a few months, but until then gccgo continues to be used to build for z Systems/LinuxONE. It’s important to recognize Dominik Vogt and his early work enabling gccgo support for s390x, as without that initial porting effort gccgo would not have been available for our z Systems.

As Seelam got deeper into the porting effort, he realized this would be a significant task, and enlisted three IBM Tokyo Research Lab members to work on the libcontainer and Docker porting pieces. Yohei Ueda, Tatsushi Inagaki, and Kazunori Ogata accomplished a significant amount of the early work, sometimes with very small patches that were huge steps forward in getting Docker to compile for both POWER and z Systems (named “s390x” in Linux CPU architecture vernacular). With the work on Go language support, both official upstream support and support in gccgo, and the porting effort in the engine and libcontainer, progress for both platforms was finally well underway. As an aside, sometimes seemingly inconsequential events have considerable impact much later. In discussing this project history, Seelam mentioned to me that David Edelsohn from IBM had worked hard years ago, long before something called Docker even existed, to get Go officially supported in the gcc community. Without that work, including hammering out copyright and other issues with Ian Taylor from Google, using gccgo for the Docker work many years later would not have even been possible!

Connecting the Dots

Getting something to compile the first time is an exciting moment if you’ve ever done a significant porting effort. The euphoria doesn’t last long in a fast-moving open source project: a particular PR gets merged, and you are broken again due to some nuance that conflicts with your newly compiled platform! There is a long list of unsung heroes who took this early work from the Systems, Research and compiler teams and hammered on bugs and re-works until they were able to solve various difficult issues, get all integration tests passing, and coordinate adding IBM hardware into the CI pipeline for the Docker project. Srini Brahmaroutu from my IBM Open Technologies team carried the leadership on this effort for what I’m sure seemed like an eternity! I’m sure he was dreading the question each week on our internal status call: “So, how’s the porting effort going for POWER and z?” In those early days he benefited from very capable help from Jessie Frazelle and Mike Dougherty at Docker, who added our hardware/VMs to the Jenkins CI configuration and took care of all the steps needed to set up the accounts and admin privileges that allow IBM to debug and administer these systems. It was a momentous day when the Dockerfiles for these architectures were finally merged into master. Christy Perez from our Linux Technology Center also joined the effort and effectively handled the POWER VM setup work for CI (which ended up with many early complications, drawing out the work longer than originally expected!), with help from Jenifer Hopper from the POWER performance team to make sure the systems were properly tuned. Since that initial trial by fire, Christy has jumped into fixing compilation problems, solving hard-to-find bugs (with Michael Chase-Salerno in this case), debugging potential upstream issues, adding missing syscalls to Golang (with Lynn Boger, noted above), and making changes anywhere else they were needed to make Docker compile, test, and run!

As Christy had her hands full with that ongoing work, Chris Jones (more recognizable as tophj-ibm on GitHub) joined the cause and has doggedly worked on CI failures for both POWER and z platforms, as well as on getting features like seccomp working across the new architectures, with knowledgeable help from Justin Cormack at Docker. Many of these hard-working IBM Linux Technology Center colleagues were led by Pradipta Banerjee, the worldwide container team lead for the LTC, who helped coordinate this work across the various teams.

For the z Systems work, much of IBM’s expertise on the Linux software side of our mainframe business exists at the IBM development lab in Boeblingen, Germany. Two notable developers from that team have worked on specific z Systems efforts: Utz Bacher and Michael Holzheu. Utz has been championing the work to bring Docker to z Systems for quite some time, and his blog and efforts with distros and packaging have been key to this effort. Michael has joined Christy and Chris on the CI and other Docker work to keep z building smoothly in the latest master builds of the engine, including his analogous work to Chris’s on seccomp for z Systems. In addition to Lynn’s work on the compiler side, Michael Munday from our Toronto compiler group also labored to make sure all the loose ends are resolved with upstream Go support for the platform.

Final Notes

You can see that this porting effort was no small undertaking. The names above represent about 8 or 9 different timezones around the globe, several continents, four distinct divisions of IBM, and multiple native languages! But, the outcome is a pretty impressive and cohesive piece of work that represents all ends of the spectrum: from head-scratching issues, late night debug sessions, cross-geography conference calls, frustrations, all the way to considerable elation that it all just works. The combined work of all the people mentioned above represents literally hundreds of PRs and issues across Docker, libcontainer, Golang, opencontainers/runc, and other related projects. Deepest thanks to everyone who participated in this effort, and I’m proud of the worldwide IBM team who came together to make this a reality.

At this point, some might consider that this was merely an IBM internal effort to benefit IBM system platforms. Of course enablement of our platforms is a reasonable goal, and something we’ve done with many software platforms over the years. However, IBM has a long history of getting involved in open source not only for our own benefit but for the benefit of the broader community. For example, our Linux Technology Center, established in the early days of corporate involvement in Linux, had as one of its main values “Make Linux Better.” Along the way, our efforts to enable our platforms in the Docker community have most definitely had a positive impact on other platform porting efforts in the ARM community and at Microsoft (and vice versa, of course), including the multi-platform registry support work that I’ll be presenting at DockerCon. IBM’s long history with high-end systems as it relates to performance and scalability means we have also tried to test the limits of the Docker engine, and along the way have submitted patches and fixes to the Linux kernel and Docker communities to make sure that scalability isn’t limited artificially in ways that we could fix or improve for all platforms. While this blog post is a story focused on the platform porting efforts, we’ve been busy overall in Docker working on features like user namespaces, fixing hundreds of bugs, improving documentation, and everything in between! In summary, we hope that the investment we’ve made in Docker is not only for ourselves, but also a valued community effort that has enabled better performance and capabilities for all consumers of the platform.

Postscript

One of our main goals in making a serious investment like this was to make a Docker host’s architecture irrelevant to the user. We want a Docker engine on POWER or z Systems or x86_64 or any other enabled architecture to be usable without the user having to modify their workflow. Much of IBM’s “Open by Design” philosophy relies on tenets like workload portability across clouds and system architectures. In many ways we are now on the cusp of that being a reality, with the final few PRs and features being resolved upstream. This leads us to a final lynchpin in the discussion around images and full multi-platform support in image registries both public and private.
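As a rough illustration of what architecture-transparent image selection could look like, the Go sketch below picks the image digest matching the local platform from a manifest-list-style JSON document. The `manifests`, `platform`, `architecture`, `os`, and `digest` field names follow the shape of the registry's manifest list format; the type names, the `selectDigest` helper, and the placeholder digests are assumptions made for this example and are not taken from the Docker code base.

```go
package main

import (
	"encoding/json"
	"fmt"
	"runtime"
)

// Minimal, assumed subset of a manifest list: one entry per platform.
type platform struct {
	Architecture string `json:"architecture"`
	OS           string `json:"os"`
}

type manifestEntry struct {
	Digest   string   `json:"digest"`
	Platform platform `json:"platform"`
}

type manifestList struct {
	Manifests []manifestEntry `json:"manifests"`
}

// selectDigest (hypothetical helper) returns the digest of the first entry
// whose platform matches the given OS and architecture.
func selectDigest(raw []byte, goos, goarch string) (string, error) {
	var ml manifestList
	if err := json.Unmarshal(raw, &ml); err != nil {
		return "", err
	}
	for _, m := range ml.Manifests {
		if m.Platform.OS == goos && m.Platform.Architecture == goarch {
			return m.Digest, nil
		}
	}
	return "", fmt.Errorf("no image for %s/%s", goos, goarch)
}

// sampleManifestList uses placeholder digests, not real image digests.
const sampleManifestList = `{
  "manifests": [
    {"digest": "sha256:placeholder-amd64",   "platform": {"architecture": "amd64",   "os": "linux"}},
    {"digest": "sha256:placeholder-ppc64le", "platform": {"architecture": "ppc64le", "os": "linux"}},
    {"digest": "sha256:placeholder-s390x",   "platform": {"architecture": "s390x",   "os": "linux"}}
  ]
}`

func main() {
	digest, err := selectDigest([]byte(sampleManifestList), runtime.GOOS, runtime.GOARCH)
	if err != nil {
		fmt.Println("lookup failed:", err)
		return
	}
	fmt.Println("would pull", digest)
}
```

The point of the sketch is that the user asks for one image name and the platform match happens behind the scenes, so the workflow stays the same on every architecture.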

If you’ve used Docker at all, you know that the standard images on DockerHub are one of the key attractions of the overall platform. So, it wasn’t enough just to build a Docker engine for these new platforms; we needed the images as well. Many in the community know that Tianon Gravi and others from his team are external maintainers of the official images repository in DockerHub. With the leadership of Gerrit Huizenga and several IBMers who helped create the proper Dockerfiles, and Canonical and others providing some base distro images for these architectures, Tianon helped create, build, and manage the s390x and ppc64le repositories in DockerHub which can be used today by consumers of the Docker engine ported to POWER and z Systems. Come to my talk if you’re at DockerCon and we’ll discuss what we see as the final step in making sure that the “Docker is Docker is Docker” experience is a reality!

Related Content

- Read my preview of IBM’s activity at DockerCon Seattle 2016 on our IBM OpenTech blog.

- My blog post describing the multi-platform support in Docker registry and engine which will be detailed and demonstrated in this talk at DockerCon.

- Docker for z Systems: developerWorks.

- Docker for Linux on Power systems: developerWorks.

There are another 40 containers ready for use on z under the brunswickheads account on dockerhub.com, including mariadb, spark, R, openchain, and MEAN.